How Businesses Use Proxies for Legitimate Web Data Collection

Price comparison, SEO, brand protection — and how to do it without breaking rules.

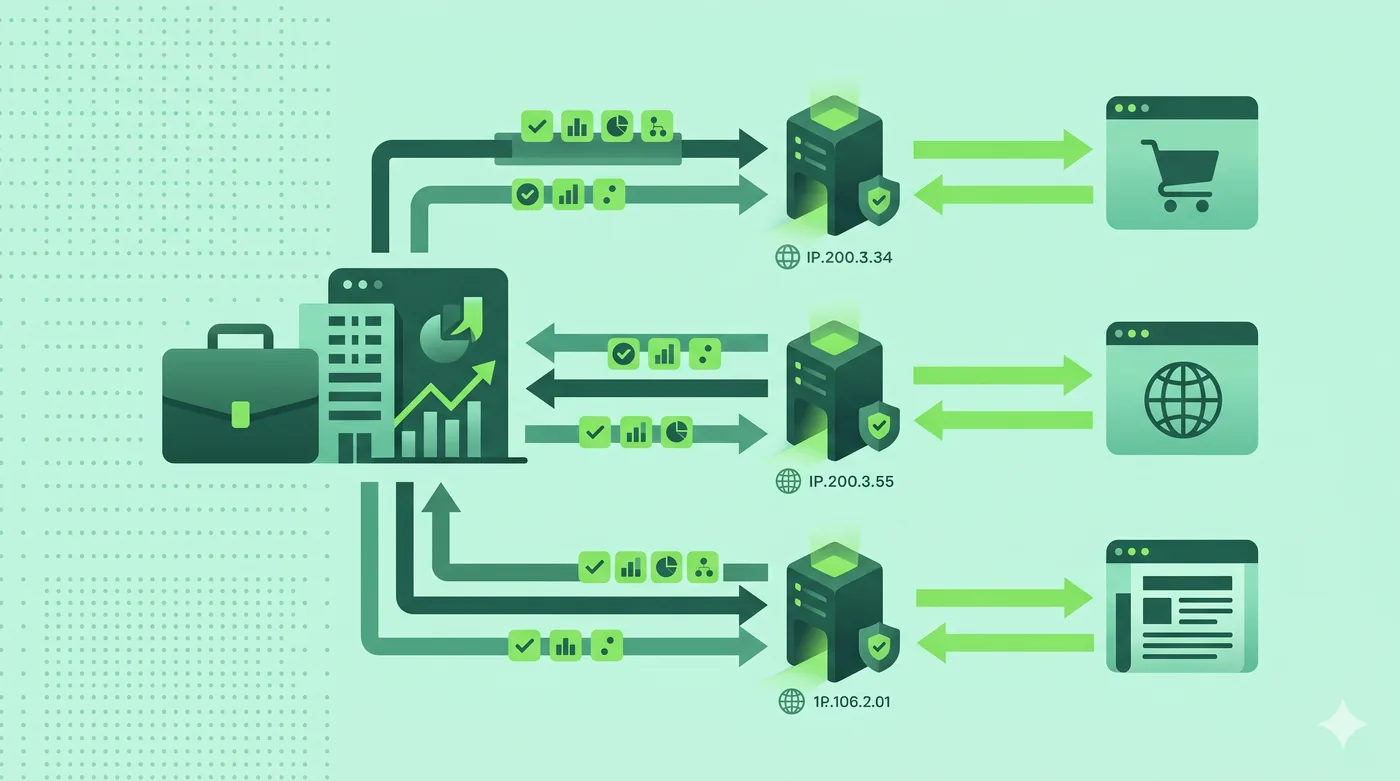

Businesses use proxies to collect publicly available data — prices, search rankings, stock levels, ad placements — without overwhelming source servers or revealing internal infrastructure. Done responsibly, that means respecting robots.txt, rate-limiting, and terms of service.

Key takeaways

- Proxies let you collect data at scale without pushing any single IP past a site's rate limits.

- Legitimate use cases include price tracking, SEO, ad verification, and brand monitoring.

- Always honour robots.txt and the target site’s ToS.

- Throttle requests; don’t cause measurable load on the source.

Why proxies, not just one server?

Many websites limit how many requests a single IP can make per minute. Spreading requests across a proxy pool keeps each IP's request rate polite while still allowing meaningful coverage.
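As a rough illustration of that idea, the sketch below rotates through a pool in round-robin order and makes sure each proxy waits a minimum interval between its own requests. The class name `ProxyRotator`, the proxy URLs, and the 10-second interval are all hypothetical placeholders, not values taken from any particular provider or site.

```python
import itertools
import time


class ProxyRotator:
    """Round-robin over a proxy pool with a minimum gap between uses of each proxy."""

    def __init__(self, proxies, min_interval_s, clock=time.monotonic, sleep=time.sleep):
        self._cycle = itertools.cycle(proxies)
        self._min_interval_s = min_interval_s
        self._last_used = {}  # proxy -> time of its most recent request
        self._clock = clock   # injectable so the logic can be tested without waiting
        self._sleep = sleep

    def next_proxy(self):
        """Return the next proxy, sleeping first if it was used too recently."""
        proxy = next(self._cycle)
        last = self._last_used.get(proxy)
        if last is not None:
            wait = self._min_interval_s - (self._clock() - last)
            if wait > 0:
                self._sleep(wait)
        self._last_used[proxy] = self._clock()
        return proxy


# Hypothetical pool; real endpoints would come from your proxy provider.
POOL = ["http://proxy-a.example:8080", "http://proxy-b.example:8080"]
rotator = ProxyRotator(POOL, min_interval_s=10.0)
```

Each call to `rotator.next_proxy()` returns the proxy to use for the next request. The per-proxy interval is what keeps the rate polite; a larger pool raises total throughput without changing what any one IP sends.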

Common legitimate use cases

E-commerce companies track competitor prices on hundreds of SKUs. Marketing teams verify that their ads display correctly across regions. SEO teams measure search rankings from different locations. Brand protection teams scan for trademark abuse on marketplaces.

Doing it ethically

Read robots.txt and the site’s terms of service before scraping. Respect any Crawl-delay directive. Identify your bot in the User-Agent header. If a site has a public API, prefer it. If a site explicitly forbids scraping, find another data source.
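The robots.txt and Crawl-delay checks can be automated with Python's standard `urllib.robotparser` module. The following is a minimal sketch: the bot name, contact URL, sample rules, and the one-second default delay are assumptions made up for illustration. It parses robots.txt text that has already been downloaded, so it makes no network request itself.

```python
from urllib import robotparser

# Hypothetical bot identity; use a name and contact URL that really identify you.
USER_AGENT = "ExampleCorpPriceBot/1.0 (+https://example.com/bot-info)"


def build_policy(robots_txt):
    """Parse the text of a robots.txt file into a reusable policy object."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed_with_delay(parser, url, default_delay_s=1.0):
    """Return (allowed, delay_in_seconds); delay is None when fetching is disallowed."""
    if not parser.can_fetch(USER_AGENT, url):
        return (False, None)
    delay = parser.crawl_delay(USER_AGENT)
    if delay is None:
        # No Crawl-delay given: fall back to an assumed conservative default.
        return (True, default_delay_s)
    return (True, float(delay))


SAMPLE_ROBOTS = """\
User-agent: *
Crawl-delay: 5
Disallow: /checkout/
"""

policy = build_policy(SAMPLE_ROBOTS)
print(allowed_with_delay(policy, "https://shop.example/products/123"))
print(allowed_with_delay(policy, "https://shop.example/checkout/cart"))
```

Passing robots.txt still leaves the terms of service to read by hand; the parser only covers what the file itself declares.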

Operational tips

Cache aggressively to reduce repeat requests. Use exponential back-off on errors. Log every request so you can prove your behaviour was reasonable if questioned.
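These three habits fit together in a single fetch wrapper. The sketch below is one possible shape, not a reference implementation. The `fetch` callable, the dictionary cache, the retry limit, and the 60-second cap are all assumptions. It catches `OSError` on the assumption that your HTTP client raises network errors derived from it, as `urllib` does.

```python
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger("collector")


def fetch_with_retry(url, fetch, cache, max_attempts=4, base_s=1.0, sleep=time.sleep):
    """Fetch url via fetch(url), using a cache, exponential back-off, and request logs."""
    if url in cache:
        log.info("cache hit url=%s", url)
        return cache[url]
    for attempt in range(1, max_attempts + 1):
        try:
            body = fetch(url)
        except OSError as exc:
            if attempt == max_attempts:
                log.error("giving up url=%s attempts=%d error=%s", url, attempt, exc)
                raise
            # Delay doubles after each failure (1s, 2s, 4s, ...), capped at 60s.
            delay = min(base_s * 2 ** (attempt - 1), 60.0)
            log.warning("retry url=%s attempt=%d wait=%.1fs error=%s", url, attempt, delay, exc)
            sleep(delay)
        else:
            log.info("fetched url=%s attempt=%d bytes=%d", url, attempt, len(body))
            cache[url] = body
            return body
```

In practice `fetch` would wrap whatever HTTP client you use, and the cache would usually have an expiry so stale prices are refreshed. Adding a small random jitter to each delay is a common refinement when many workers retry at once.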

Frequently asked questions

Is web scraping legal?

It depends on what you scrape, where, and how. Public data with respectful access is usually fine; scraping behind a login or against ToS may not be. Get legal advice for anything commercial.

How many proxies do I need?

Enough that no single IP exceeds the source site’s polite-rate threshold. For most projects, that is dozens, not thousands.
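The sizing rule is simple arithmetic: divide the total request rate you need by the rate you consider polite for one IP, then round up. The sketch below uses made-up numbers (300 requests per minute overall, 6 per minute per IP) purely to show the calculation; the right per-IP figure depends on the site.

```python
import math


def proxies_needed(total_requests_per_min, polite_per_ip_per_min):
    """Smallest pool size that keeps every IP at or below the polite rate."""
    if polite_per_ip_per_min <= 0:
        raise ValueError("polite_per_ip_per_min must be positive")
    return max(1, math.ceil(total_requests_per_min / polite_per_ip_per_min))


# Illustrative numbers only: 300 requests/min total, 6 requests/min per IP.
print(proxies_needed(300, 6))  # 50
```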

Can I use a VPN instead?

VPNs typically only give you one IP at a time. For scraping at scale, a proxy pool is the right tool.
